Oracle Autonomous Transaction Processing delivers a self-driving, self-securing, self-repairing database service that can scale instantly to meet the demands of mission-critical transaction processing and mixed-workload applications. When you create an Autonomous Transaction Processing database, you can deploy it to one of two infrastructure platforms:

- Serverless: a simple, elastic deployment choice in which Oracle autonomously operates every aspect of the database life cycle, from database placement to backups and updates.

- Dedicated: a "private cloud in the public cloud" deployment choice, with compute, storage, network, and database service dedicated entirely to a single tenant. Dedicated deployment provides the highest level of security isolation and governance. The customer can customize the operational policies that guide autonomous operations for workload placement, workload optimization, update scheduling, availability level, overprovisioning, and peak usage.

Customer’s requirement

The customer's in-house Java Spring Boot workflow application had the following requirements for its live environment. Oracle Autonomous Transaction Processing (ATP) met those requirements, and our database administration team migrated the existing workloads to an ATP database.

The ATP features the customer valued most are listed below. Lower cost, high availability, and performance were the key reasons the customer chose ATP.

Highly Available

- Recovers automatically from any failure

- 99.995 percent uptime including maintenance, guaranteed

- Elastically scales compute or storage as needed with no downtime

Industry-Leading Performance

- Integrated machine-learning algorithms drive automatic caching, adaptive indexing, advanced compression, and optimized cloud data-loading to deliver unrivaled performance

- Automatic adaptive performance tuning delivers faster analytics

Secured

- Administers security automatically, applying patches and updates itself with no downtime

- All data is automatically secured with strong encryption, turned on by default

- Access is monitored and controlled, protecting from external attacks and unauthorized internal access

Lower Cost

- Expand/shrink compute and storage independently without costly downtime

- No overpaying for partially used, fixed configurations

- Make the move to Oracle Cloud and cut your Amazon bill in half—guaranteed

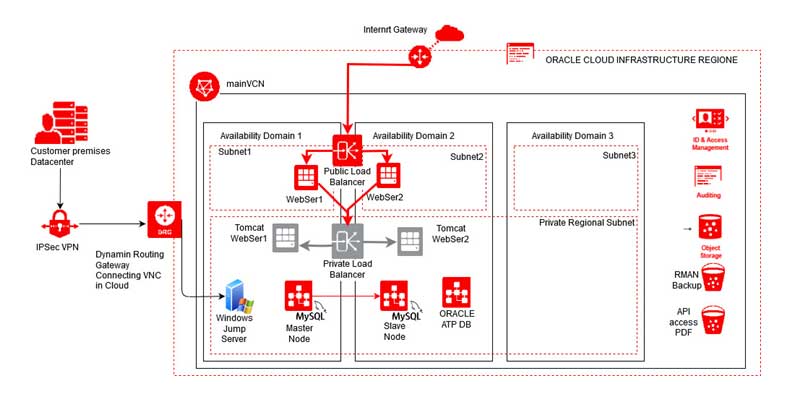

Architecture of the Oracle Cloud HA environment

To achieve high availability in the Oracle Cloud Gen 2 environment, we used the following components.

A region is a localized geographic area, and an availability domain is one or more data centers located within a region. A region is composed of one or more availability domains.

We selected the Frankfurt region, which has three availability domains.

What is an availability domain?

Availability domains are isolated from each other, fault tolerant, and very unlikely to fail simultaneously. Because availability domains do not share infrastructure such as power or cooling, or the internal availability domain network, a failure at one availability domain within a region is unlikely to impact the availability of the others within the same region.

The availability domains within the same region are connected to each other by a low latency, high bandwidth network, which makes it possible for you to provide high-availability connectivity to the internet and on-premises, and to build replicated systems in multiple availability domains for both high-availability and disaster recovery.

What is a fault domain?

A fault domain is a grouping of hardware and infrastructure within an availability domain. Each availability domain contains three fault domains. Fault domains let you distribute your instances so that they are not on the same physical hardware within a single availability domain. A hardware failure or Compute hardware maintenance that affects one fault domain does not affect instances in other fault domains.
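To illustrate the spreading idea, here is a minimal Java sketch that assigns instances round-robin across the three fault domains of one availability domain. With this scheme, any three consecutive instances land on different hardware. The `FAULT-DOMAIN-n` names follow OCI's naming. The round-robin policy, the `FaultDomainPlacement` class, and the instance names are illustrative assumptions, not a description of how OCI picks a fault domain when you leave it unset.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical helper: spreads instances across the fault domains of one availability domain. */
class FaultDomainPlacement {
    // OCI names the three fault domains in each availability domain like this.
    static final List<String> FAULT_DOMAINS =
            List.of("FAULT-DOMAIN-1", "FAULT-DOMAIN-2", "FAULT-DOMAIN-3");

    /** Assigns instances round-robin, so any three consecutive instances get distinct fault domains. */
    static Map<String, String> assign(List<String> instances) {
        Map<String, String> placement = new LinkedHashMap<>(); // keeps input order for readability
        for (int i = 0; i < instances.size(); i++) {
            placement.put(instances.get(i), FAULT_DOMAINS.get(i % FAULT_DOMAINS.size()));
        }
        return placement;
    }

    public static void main(String[] args) {
        // Hypothetical instance names.
        System.out.println(assign(List.of("app-1", "app-2", "jump-1")));
        // prints {app-1=FAULT-DOMAIN-1, app-2=FAULT-DOMAIN-2, jump-1=FAULT-DOMAIN-3}
    }
}
```

In practice you set the fault domain explicitly when launching each instance. The sketch only shows the bookkeeping that keeps the instances apart.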

HA in the project: best practices for availability domains and fault domains

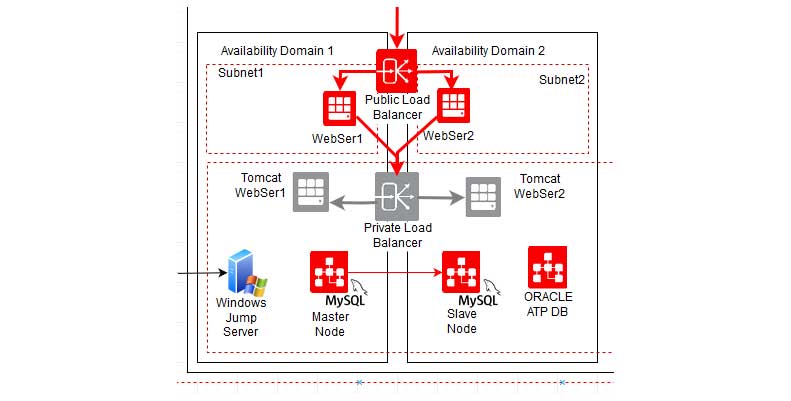

As the image above shows, the servers are distributed across the availability domains:

- Web server 1 in AD1 and web server 2 in AD2

- Backend Tomcat server 1 in AD1 and Tomcat server 2 in AD2

- MySQL master node in AD1 and a binlog-replicated MySQL slave in AD2

- Windows jump server in a different fault domain in AD1
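The rule behind this layout is that no redundant pair may sit in a single availability domain. Below is a minimal Java sketch of that check. It assumes a hypothetical inventory map from server name to AD. The server names mirror the list above but are illustrative only, as is the `AdPlacementCheck` class.

```java
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;

/** Hypothetical check that each redundant pair of servers spans two availability domains. */
class AdPlacementCheck {

    /** Returns the pairs whose two members were placed in the same AD (assumes both are in the inventory). */
    static List<List<String>> pairsSharingAnAd(Map<String, String> adByServer, List<List<String>> pairs) {
        return pairs.stream()
                .filter(pair -> adByServer.get(pair.get(0)).equals(adByServer.get(pair.get(1))))
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        // Inventory mirroring the layout described above (names are illustrative).
        Map<String, String> adByServer = Map.of(
                "web-1", "AD1", "web-2", "AD2",
                "tomcat-1", "AD1", "tomcat-2", "AD2",
                "mysql-master", "AD1", "mysql-slave", "AD2");
        List<List<String>> pairs = List.of(
                List.of("web-1", "web-2"),
                List.of("tomcat-1", "tomcat-2"),
                List.of("mysql-master", "mysql-slave"));
        List<List<String>> violations = pairsSharingAnAd(adByServer, pairs);
        System.out.println(violations.isEmpty() ? "All pairs span two ADs" : "Violations: " + violations);
    }
}
```

A check like this could run against an exported instance inventory before each change, so a redeployment does not quietly move both members of a pair into one AD.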

Subnets and load balancers in the HA environment

In this implementation we used several subnets so that each could have its own security rules and access restrictions:

- Public subnets for the web servers, with public IP addresses bound to them

- Private subnets, routed through NAT gateways, for machines that have only a management IP address inside the VCN

- Regional subnets for the private load balancer rules and security lists

- Availability domain-specific subnets to limit NAT gateway access and port access

Public Load Balancer

A public load balancer is regional in scope. If your region includes multiple availability domains, a public load balancer requires either a regional subnet (recommended) or two availability domain-specific (AD-specific) subnets, each in a separate availability domain. With a regional subnet, the Load Balancing service creates a primary load balancer and a standby load balancer, each in a different availability domain, to ensure accessibility even during an availability domain outage. If you create a load balancer in two AD-specific subnets, one subnet hosts the primary load balancer and the other hosts a standby load balancer. If the primary load balancer fails, the public IP address switches to the secondary load balancer. The service treats the two load balancers as equivalent and you cannot specify which one is “primary”.
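To make the failover behavior concrete, here is a toy Java model. It is not OCI's implementation. A single public IP is served by whichever of two equivalent load balancer nodes is currently active, and it moves to the other node when the active one fails. The class, method, and node names are hypothetical. Which node starts out active is arbitrary, which mirrors the fact that you cannot choose the primary.

```java
/** Toy model of a public IP that floats between two equivalent load balancer nodes. */
class FloatingIpModel {
    private final String[] nodes;   // the two load balancers, one per availability domain
    private final boolean[] healthy;
    private int active = 0;         // index of the node currently holding the public IP

    FloatingIpModel(String nodeA, String nodeB) {
        this.nodes = new String[] {nodeA, nodeB};
        this.healthy = new boolean[] {true, true};
    }

    /** Marks a node as failed; if it held the public IP, the IP moves to the other node if that one is healthy. */
    void fail(String node) {
        int idx = indexOf(node);
        healthy[idx] = false;
        if (idx == active && healthy[1 - idx]) {
            active = 1 - idx;
        }
    }

    /** Returns the node currently answering on the public IP, or null if both nodes are down. */
    String activeNode() {
        return healthy[active] ? nodes[active] : null;
    }

    private int indexOf(String node) {
        for (int i = 0; i < nodes.length; i++) {
            if (nodes[i].equals(node)) return i;
        }
        throw new IllegalArgumentException("Unknown node: " + node);
    }

    public static void main(String[] args) {
        FloatingIpModel ip = new FloatingIpModel("lb-ad1", "lb-ad2");
        System.out.println(ip.activeNode()); // prints lb-ad1
        ip.fail("lb-ad1");
        System.out.println(ip.activeNode()); // prints lb-ad2
    }
}
```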

Private Load Balancer

To isolate your load balancer from the internet and simplify your security posture, you can create a private load balancer. The Load Balancing service assigns it a private IP address that serves as the entry point for incoming traffic.

When you create a private load balancer, the service requires only one subnet to host both the primary and standby load balancers. The load balancer can be regional or AD-specific, depending on the scope of the host subnet. The load balancer is accessible only from within the VCN that contains the host subnet, or as further restricted by your security rules.

The assigned floating private IP address is local to the host subnet. The primary and standby load balancers each require an extra private IP address from the host subnet.
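These requirements translate into a simple address count when you size the host subnet. A private load balancer consumes three private IPs from its subnet: the floating entry-point address plus one each for the primary and standby. OCI also reserves the first two addresses and the last address of every subnet. The sketch below computes how many addresses are left for other resources, given an IPv4 prefix length. The `PrivateLbSubnetSizing` class and its method name are hypothetical.

```java
/** Hypothetical sizing helper for a subnet that hosts a private load balancer. */
class PrivateLbSubnetSizing {
    static final int OCI_RESERVED_PER_SUBNET = 3; // first two and last address of the subnet CIDR
    static final int PRIVATE_LB_ADDRESSES = 3;    // floating IP + primary + standby

    /** Addresses left for other resources in an IPv4 subnet with the given prefix length. */
    static long remainingAddresses(int prefixLength) {
        if (prefixLength < 0 || prefixLength > 32) {
            throw new IllegalArgumentException("Invalid IPv4 prefix length: " + prefixLength);
        }
        long total = 1L << (32 - prefixLength);
        long remaining = total - OCI_RESERVED_PER_SUBNET - PRIVATE_LB_ADDRESSES;
        if (remaining < 0) {
            throw new IllegalArgumentException("A /" + prefixLength + " subnet is too small for a private load balancer");
        }
        return remaining;
    }

    public static void main(String[] args) {
        System.out.println("/24 -> " + remainingAddresses(24)); // prints /24 -> 250
        System.out.println("/29 -> " + remainingAddresses(29)); // prints /29 -> 2
    }
}
```

By this count, a /30 subnet (four addresses, one usable after the reservation) cannot host a private load balancer at all, and a /29 leaves only two addresses for anything else.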

Challenges faced in the Autonomous Database implementation

While configuring the Java Spring Boot application to use Oracle ATP, we faced the following challenges:

- Connecting developer environments to ATP with the wallet file.

- Developers had to upgrade SQL Developer to connect to the new ATP environment using the wallet file configuration.

- Java applications require a Java KeyStore (JKS) or an Oracle wallet to connect to ATP or ADW (Autonomous Data Warehouse).

- If you use JDK 11, JDK 10, or JDK 9, nothing extra is needed. JDK versions older than JDK 8u162 need additional configuration, so we also had to upgrade the JDK versions.

- Regular schema users needed ATP-specific grants.

- Data migration ran into issues with the older Oracle Database versions.

- To secure application access to the ATP wallet, we had to configure security and permissions for specific users and groups.

- Manual backups to Object Storage buckets had issues with user permissions.
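The wallet and JDK points above can be combined into a small pre-flight check. The sketch below assumes Oracle JDBC driver 18.3 or later, which accepts the wallet directory through the `TNS_ADMIN` parameter in the connection URL. It also uses the JDK 8u162 threshold mentioned above. The JKS variant assumes the `keystore.jks` and `truststore.jks` files shipped in the downloaded wallet, protected by the wallet password. The service alias `mydb_high`, the wallet path, and the `AtpConnectionPreflight` helpers are hypothetical. The code only builds strings and properties, so it runs without a database or the driver on the classpath.

```java
import java.util.Properties;

/** Hypothetical pre-flight helpers for connecting a Java application to ATP with a wallet. */
class AtpConnectionPreflight {

    /** True for JDK 9+, or for a JDK 8 build at update 162 or later. */
    static boolean jdkIsRecentEnough(String javaVersion) {
        if (javaVersion.startsWith("1.")) {
            // Legacy scheme: 1.<major>.0_<update>[-suffix], e.g. "1.8.0_161".
            String[] parts = javaVersion.split("[._-]");
            int major = Integer.parseInt(parts[1]);
            int update = parts.length > 3 ? Integer.parseInt(parts[3]) : 0;
            return major > 8 || (major == 8 && update >= 162);
        }
        // JDK 9+ scheme: "<major>[.<minor>...]", e.g. "11.0.2" or "17".
        int major = Integer.parseInt(javaVersion.split("[.+-]")[0]);
        return major >= 9;
    }

    /** Thin-driver URL that points TNS_ADMIN at the unzipped wallet directory. */
    static String walletUrl(String serviceAlias, String walletDir) {
        return "jdbc:oracle:thin:@" + serviceAlias + "?TNS_ADMIN=" + walletDir;
    }

    /** JKS-based SSL connection properties using the key and trust stores from the wallet. */
    static Properties jksProperties(String walletDir, String walletPassword) {
        Properties props = new Properties();
        props.setProperty("javax.net.ssl.trustStore", walletDir + "/truststore.jks");
        props.setProperty("javax.net.ssl.trustStorePassword", walletPassword);
        props.setProperty("javax.net.ssl.keyStore", walletDir + "/keystore.jks");
        props.setProperty("javax.net.ssl.keyStorePassword", walletPassword);
        props.setProperty("oracle.net.ssl_server_dn_match", "true");
        return props;
    }

    public static void main(String[] args) {
        String version = System.getProperty("java.version");
        System.out.println("JDK " + version + " meets the 8u162 threshold: " + jdkIsRecentEnough(version));
        // Hypothetical alias and path; the real aliases are listed in the wallet's tnsnames.ora.
        System.out.println(walletUrl("mydb_high", "/opt/app/wallet"));
    }
}
```

With the driver on the classpath, the URL and properties (plus `user` and `password`) would be passed to `DriverManager.getConnection(url, props)`. Running the version check first means an outdated JDK is reported with a clear message before any connection is attempted.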